Of all the new features and capabilities currently available with Azure Analysis Services, dynamic scaling up/down and in/out are my 2 favorite.

-

- Scaling Up refers to adding more (CPU and/or memory) resources

- Scaling Out refers to adding more servers

Together these features allow you to adjust resources to match changes in workload with only a few clicks of a mouse. These features also eliminate the risks associated with having to estimate resource requirements and purchase hardware with enough horsepower for the next few years.

In this post I’ll show how to determine if/when it is time to scale and which direction to scale.

When to Scale?

It is time to scale when you’ve maxed out your system resources and still need to increase your throughput. In other words, you need to do more work in the same amount of time or the same amount of work in less time… and you want to do it without making code changes.

With that in mind, determining when to scale comes down to detecting a resource bottleneck… which for Azure AS tabular models means Memory and/or CPU bottlenecks.

Memory Bottlenecks

Detecting a memory bottleneck is as straight forward as it gets…all you need to do is put a check by the following metrics in the Azure portal…

- [Memory: Memory Usage]

- [Memory: Memory Limit High]

- [Memory: Memory Limit Hard]

If the [Memory: Memory Usage] line hits the [Memory: Memory Limit High] you have memory pressure. If it crosses the [Memory: Memory Limit High] and approaches the [Memory: Memory Limit Hard] line, you have severe memory pressure and need to take action. When the [Memory: Memory Usage] line hits the [Memory: Memory Limit Hard] line you will get an error.

In the screenshot above, the [Memory: Memory Usage] line is well below the [Memory: Memory Limit High] line, which means we’re far from a memory bottleneck.

CPU Bottlenecks

Detecting a CPU bottleneck can a bit more involved, but a good place to start is by tracking the [QPU] metric and comparing it to the QPU-limit for the SKU.

Note: a QPU stands for query processing unit which is an abstraction of CPU… 100 QPUs are roughly equivalent to 5 “pretty fast” CPU cores.

Below is a quick look at the [QPU] metric for my developer instance:

This is a D1 instance with a limit of 20 QPUs. The peak in the chart above is ~6.25… so I’ve got plenty of QPUs to spare. However, if that line was pegged at 20 (or close to it) for a period of time, that would indicate I have a a CPU bottleneck and likely have queries that are queuing up waiting for available resources to execute. This could be confirmed by reviewing the [Threads: Query pool job queue length] metric which shows the number of queries waiting on a thread from the query thread pool to start executing.

For processing workloads, in addition to the [QPU] metric, you will want to keep an eye on the [Processing Pool Job Queue Length] metric. If this metric has a value > 1 for a sustained period of time, it means there is processing tasks that can’t start because all available threads in the processing thread pool are in use.

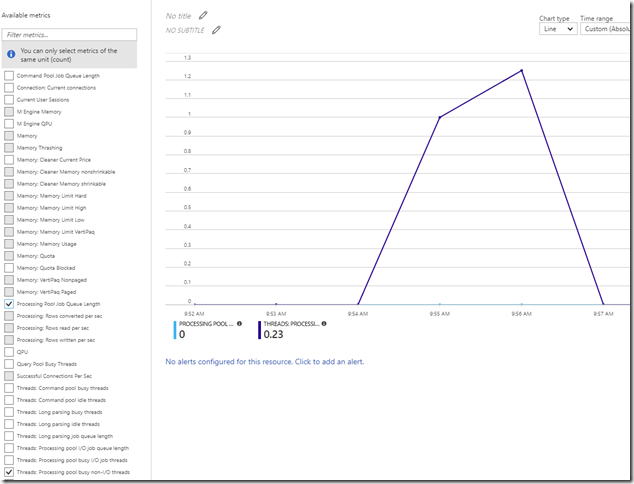

The purple line in the screenshot below is the [Threads: Processing pool busy non-I/O threads] metric showing active CPU threads doing work during processing. The [Processing Pool Job Queue Length] metric is in light blue and hugs the x-axis because there isn’t a CPU thread bottleneck.

Which direction to scale: Up or Out?

So we know we need to scale, but in which direction?

For processing workloads, scaling UP is the only option. You cannot scale OUT of resource bottlenecks during processing workloads.

For query workloads, you’ll want to take the following items into consideration…

- Processing Window

Are you processing the model during business hours and/or at times that could negatively impact reporting/query performance? If the answer is yes, then you should favor scale-out over scale-up… as scaling-out allows the separate of processing from querying. - Query Concurrency

This one is best explained with an example. Given a model running on an S2 instance that can consistently sustain a reporting/query concurrency of 40… that same model should be able to sustain 2x the concurrency if run on an S4 instance (scale-up) or if run on 2x S2 instances (scale-out). This is because query concurrency is tightly correlated with CPU/QPU. However, there’s a hard limit in the scale-up direction… currently that limit is an S9 instance w/ 640 QPUs. Building from the current example, where we can sustain 40 concurrent queries with an S2, 80 concurrent queries with an S4… we tap out at 128 concurrent queries w/ an S9. If we still need to increase concurrency, there is no other option but to scale-out.

Outside of those considerations above, it doesn’t really matter.

Additional Resources

3 replies on “When to Scale-Up or Scale-Out with Azure Analysis Services”

[…] When to Scale-Up or Scale-Out with Azure Analysis Services (@SQLbyoBI) […]

LikeLike

Does the aggregation needs to be max or avg to check if there is a QPU(CPU) bottleneck

LikeLike

I use both.

Max will tell you every single instance where the bottleneck was hit no matter how small (in terms of duration). Average can sometimes be misleading as it won’t show spikes. That said, depending on the SLA requirement, you may decide not to scale up/out if MAX is showing spikes of bottleneck but AVERAGE looks ok.

LikeLike